Eyespy

tl;dr

Client

This project was supported by the Creative Europe project – The People’s Smart Sculpture and the Gdansk City Gallery.

Role

Independent design research exploration supported by a Creative Europe project.

Concept Design • Material Exploration • High-Fidelity Prototyping (3D Printing & Code) • Visual Design • Stable On-site Installation Setup

Timeframe

June 2016 – October 2016

Brief

To provoke reflection on the commonplace yet mostly invisible presence of smart surveillance cameras in everyday life.

What do smart cameras capture, stream, interpret, and share about us? How and when do they go wrong?

What are the possibilities that machine learning presents to interaction designers as a design material?

Outcome

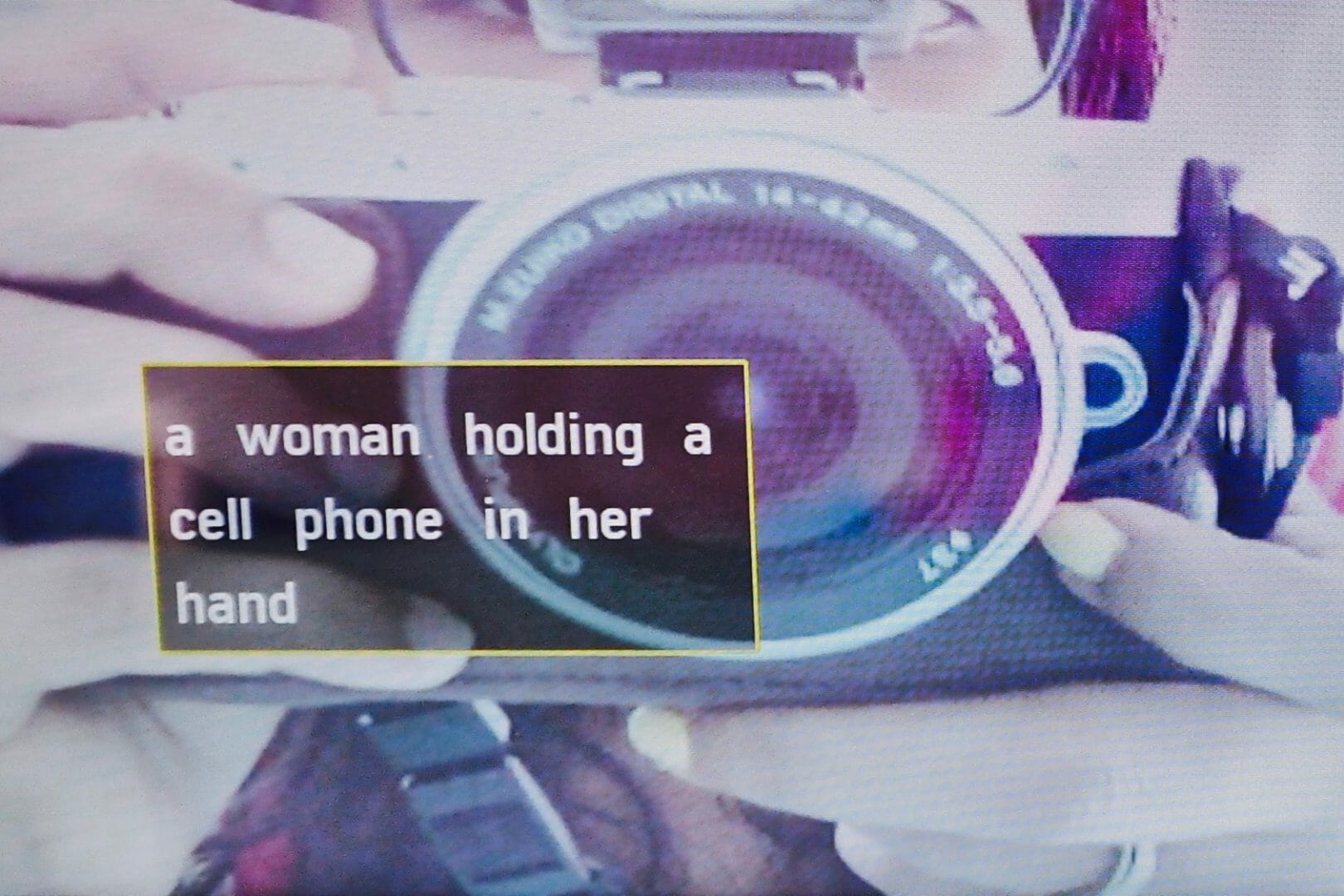

Eyespy is a smart surveillance camera that cannot monitor, identify, or communicate potential threats to the security of lived spaces and the safety of loved ones. Instead, it shows a combined live feed from surveillance cameras located across the world and a local camera pointed at the viewer. A generated text description of the feed is overlaid on top of it.

Eyespy was exhibited three times: once at a cultural festival in Gdansk, Poland, and twice at maker faires in Oslo, Norway.

“In an era where artificial intelligence is beginning to converge with surveillance […] how can we begin to understand the data these networks might soon be processing about us? What is the quality, not just quantity of the information they're recording?”

– Brian Merchant (The Sentient Surveillance Camera)

Surveillance cameras are so commonplace in cities that they have become an almost invisible part of urban life. Smart surveillance cameras don't just passively capture and transmit, but actively identify, interpret, understand, and act on the captured visual data. The resulting information can be put to different kinds of use, from predictive policing to cashier-free stores.

With this project, I wanted visitors to the installation to reflect on the widespread yet invisible presence of smart surveillance cameras. I believed that by making the invisible visible and understandable, the project could inspire people to think about our relations with these technologies that continuously capture, stream, and interpret our activities and interactions.

What I did

Exploration

Concept Design

Material Exploration

Speculative Exploration

Technical Development

Design

Prototyping

Fabrication

Visual Design

Installation Design

Role: interaction design researcher (Contract)

The project was a part of my work with the People's Smart Sculpture project and was co-financed by the Gdansk City Gallery. I worked independently on this project.

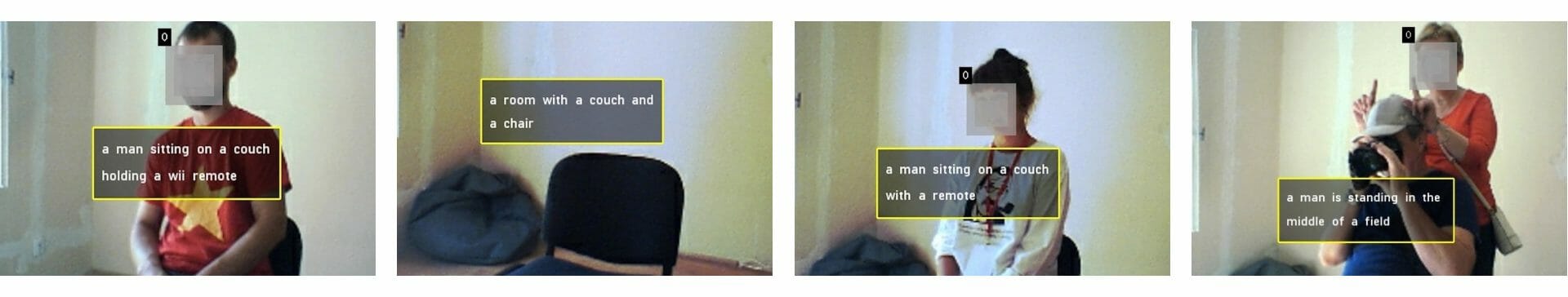

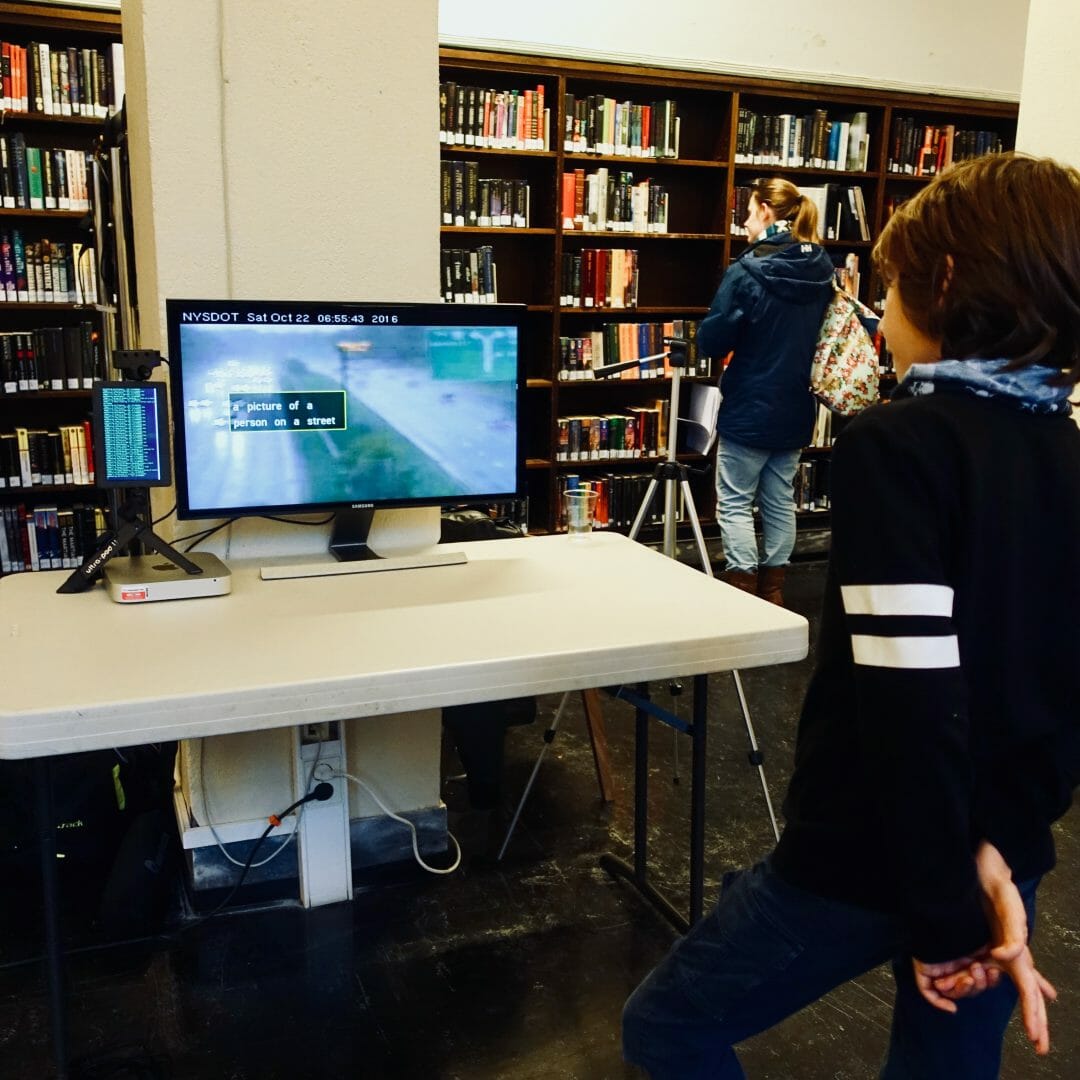

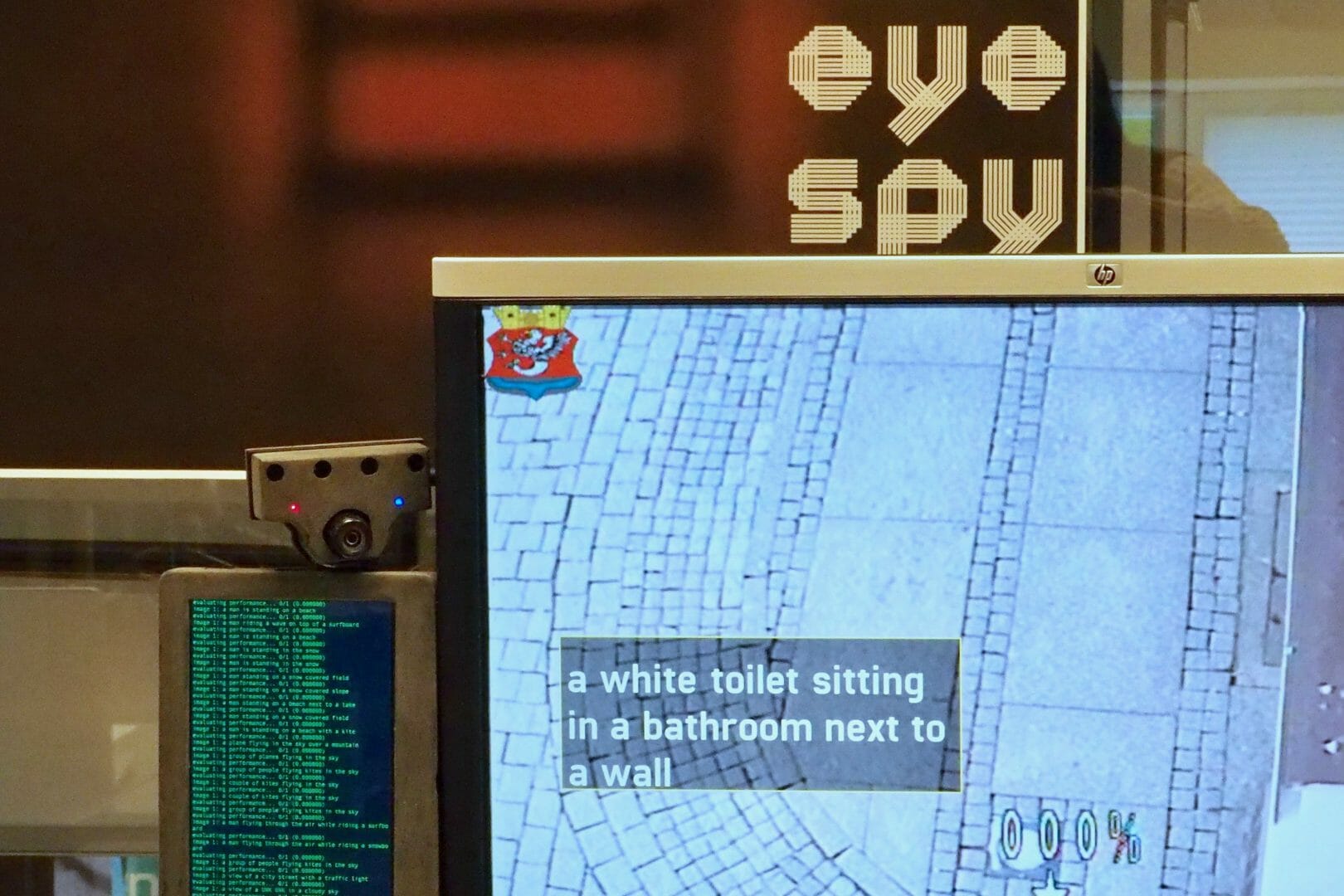

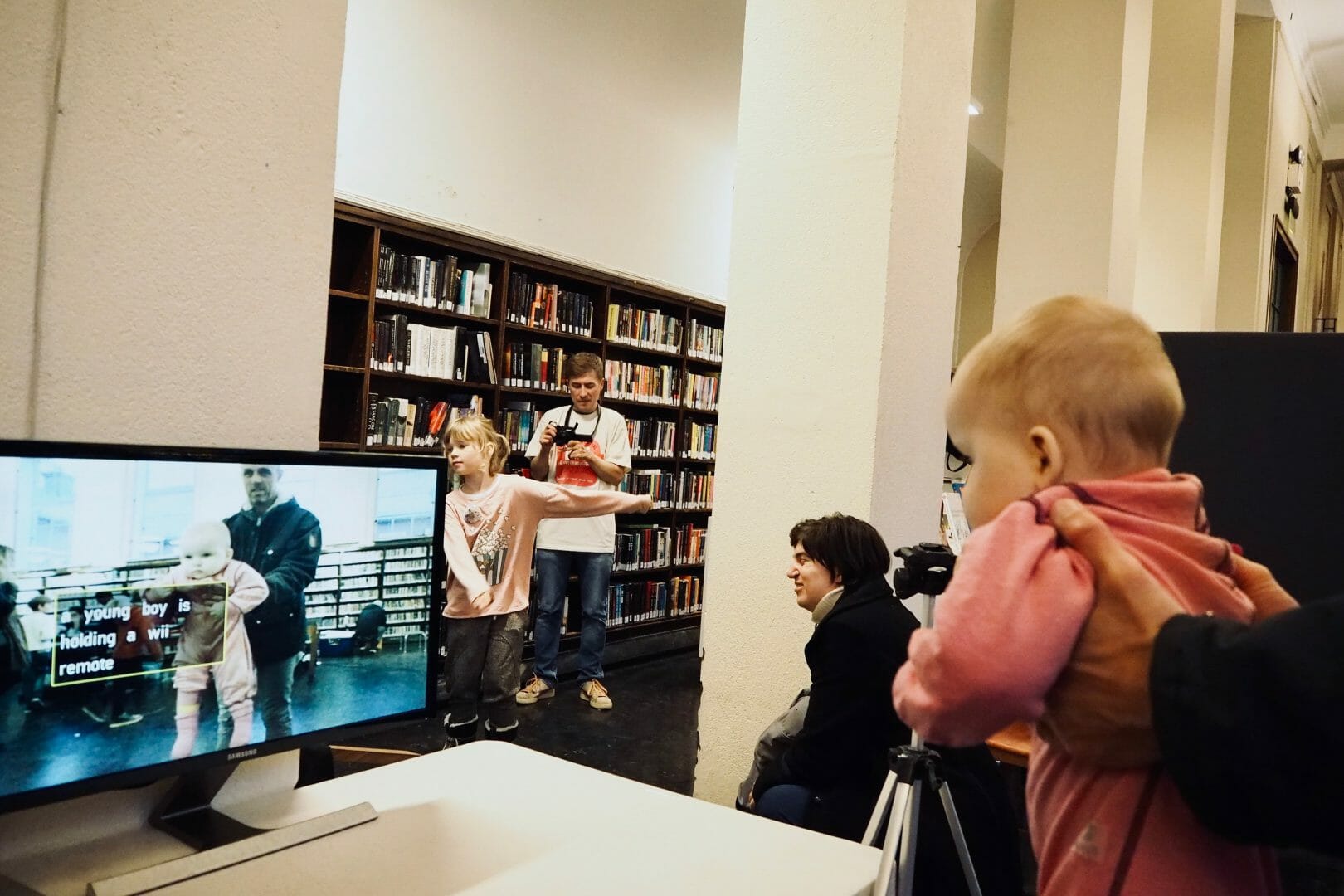

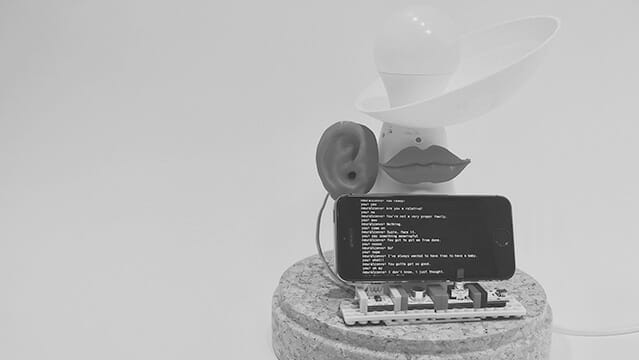

Examples of the installation audience being interpreted by Eyespy at Grassomania 8 in Gdansk

Eyespy

Eyespy conceptually flips the idea of a surveillance camera in a couple of ways:

- Normally, users view the feed from a surveillance camera remotely to monitor particular spaces when they are not there. Since Eyespy’s camera is pointed back at the viewer and kept next to the feed it displays, it can’t be used for deliberately monitoring a particular place.

- It brings into focus the ordinary and mundane data that smart cameras continuously capture and algorithmically interpret. This is in contrast to feeds from surveillance cameras that mainly highlight things that are out of the ordinary.

By combining and interpreting global camera feeds that have no personal connection to the viewers, it conceptually changes the interaction from monitoring a feed to watching it. The act of watching helps create a recreational and social experience, like that of changing channels on a television or browsing through social media. By bringing the combined interpreted feed into focus, and physically locating it next to the camera, Eyespy reframes the interaction from passive observation and interpretation by machines to an active, physically present, and to an extent, social involvement.

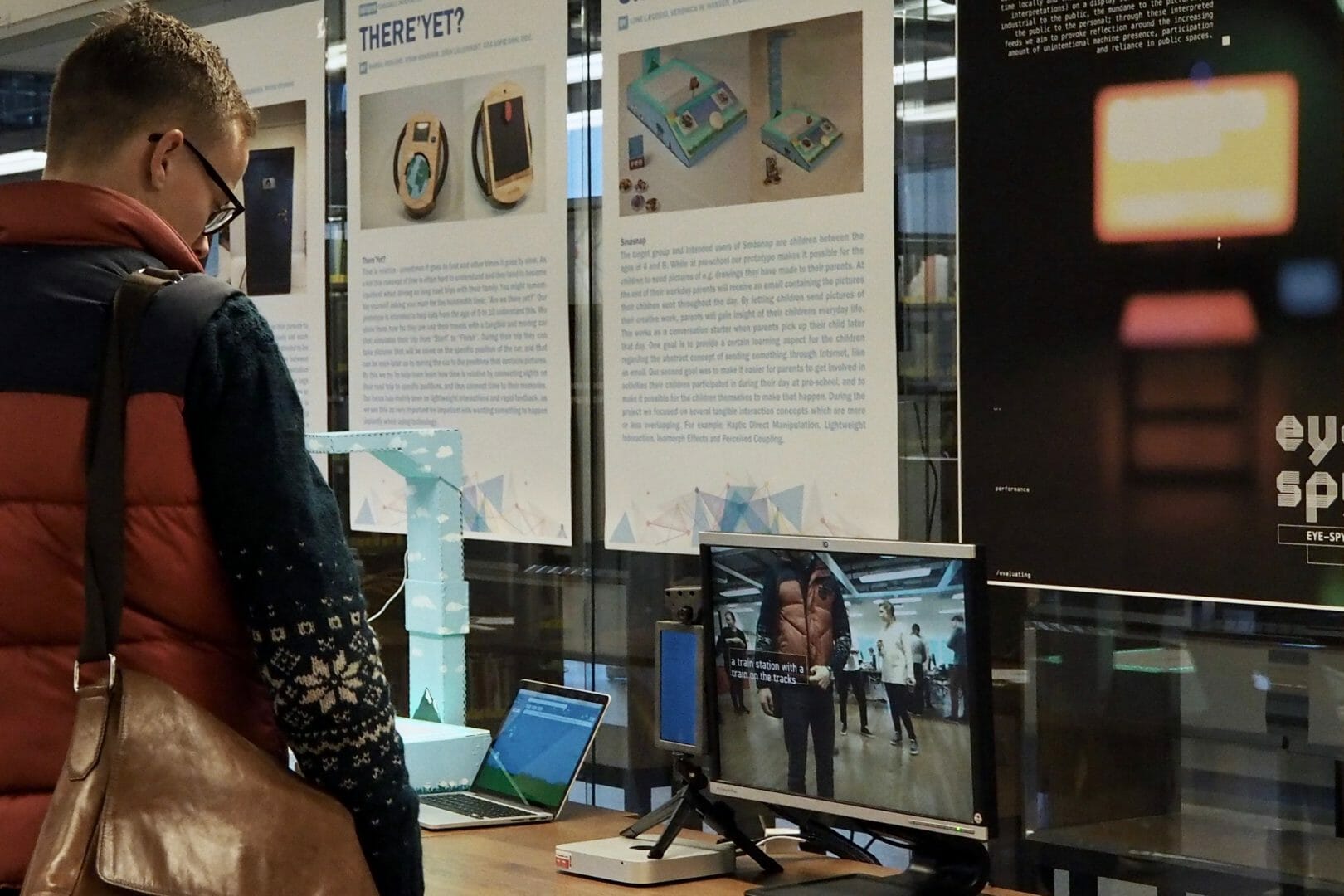

Eyespy being exhibited at Dagen@IFI 2016

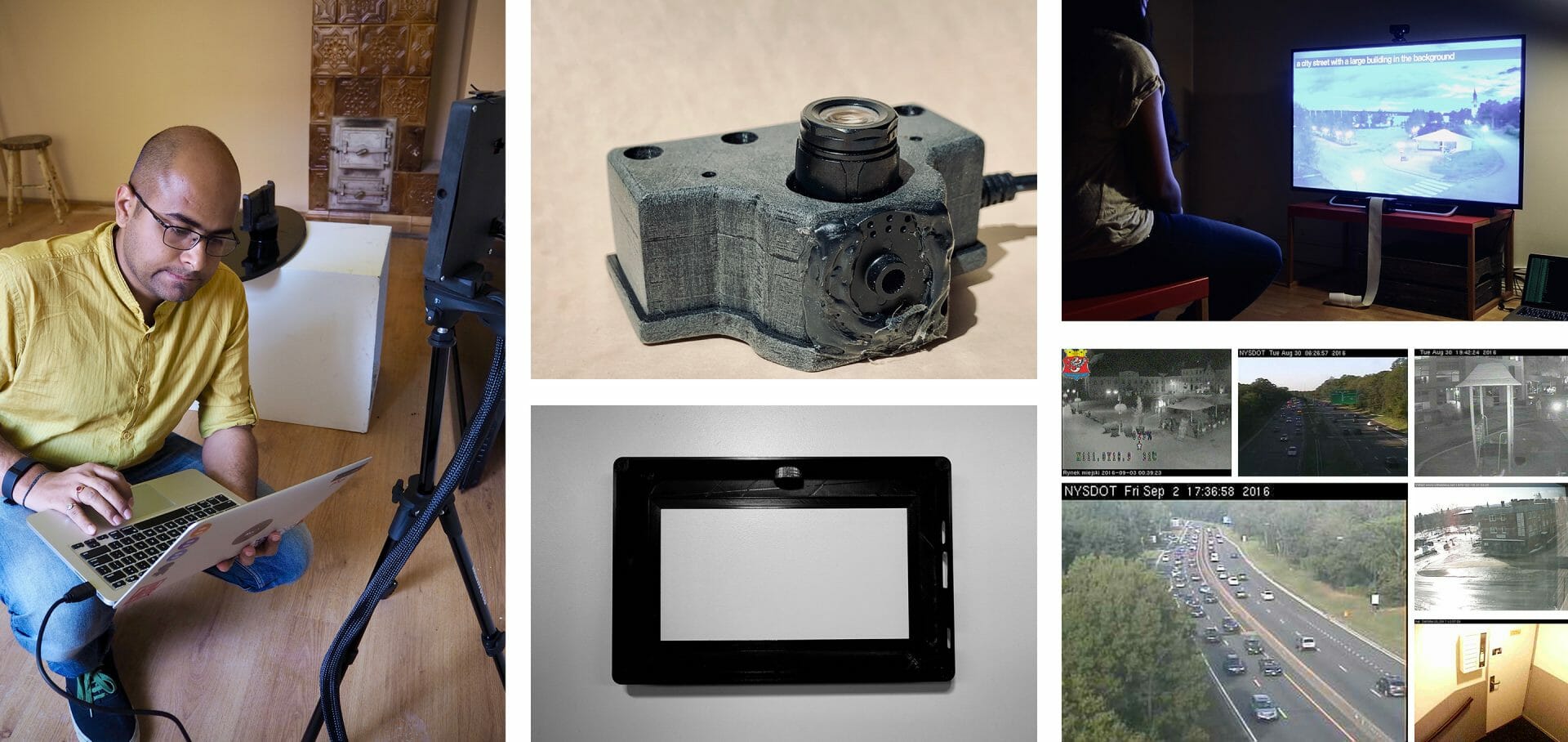

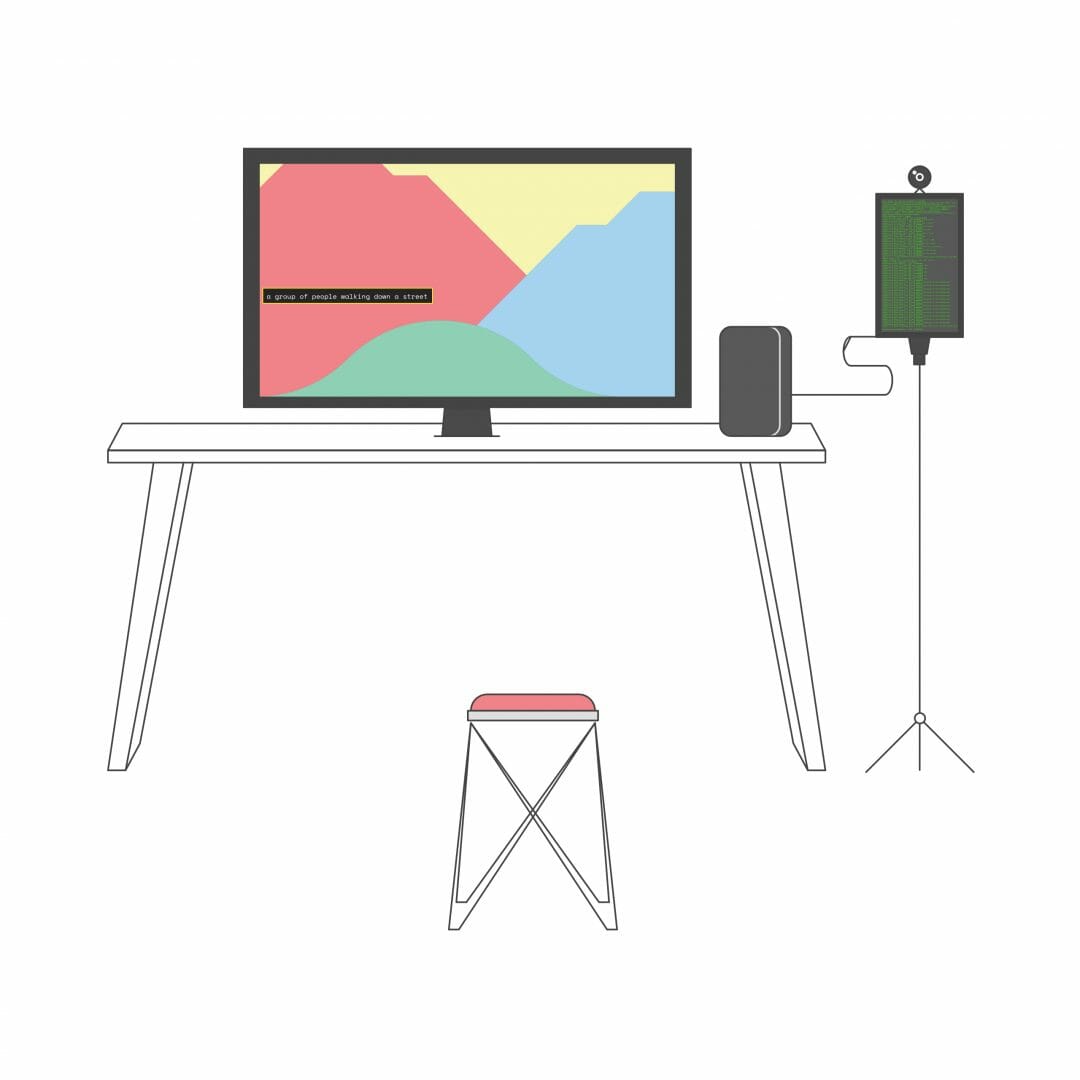

Setup

Grassomania 8: Cultural Festival

In Gdansk, I set Eyespy up in a living-room-style arrangement, with relaxed seating and a flat-screen television. In this setting, the computer was hidden behind the screen. The only unusual elements were the camera pointed back at the viewer and a second screen continuously showing raw output from the algorithm.

Oslo Maker Faire

I tweaked the setup for the subsequent events, which were mainly focused on technology. I made all parts of the installation clearly visible and showcased Eyespy as a technological spectacle, like the products exhibited at conferences and trade shows such as the Consumer Electronics Show. But even though it was presented as a product, Eyespy seemed unusual here as well because it did not have a pre-defined utility or useful function.

In both cases, the mix of the familiar with the unfamiliar in different ways was intended to make the concept feel probable and familiar, while also challenging preconceptions by adding an element of surprise and strangeness to the overall experience.

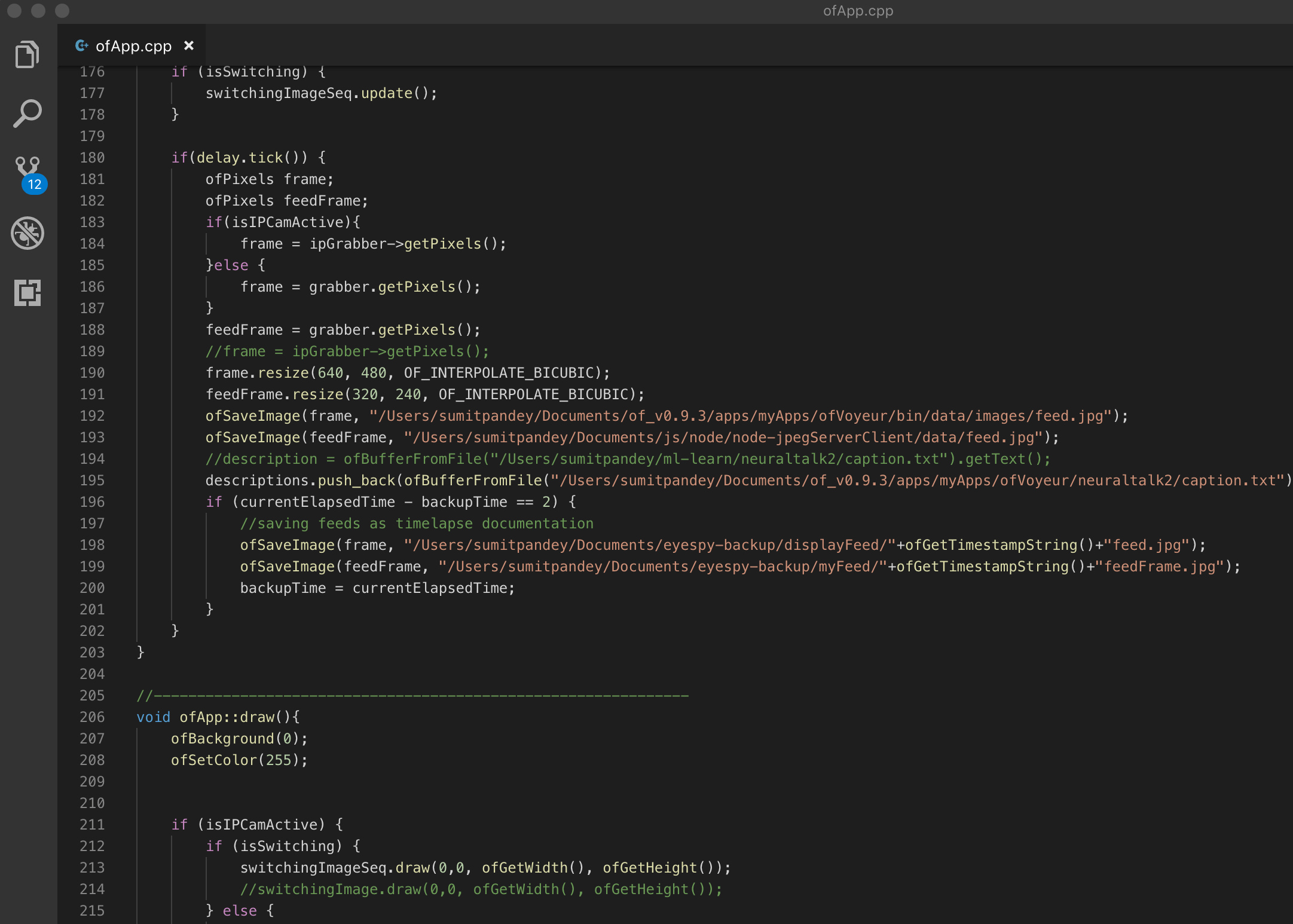

How it works

To implement the final design concept, I compiled a list of surveillance camera feed URLs by searching for open camera feeds on Shodan, a search engine for internet-connected devices, and by running customized queries on general search engines such as Google and Bing. The feeds were captured and aggregated locally in bespoke software written in openFrameworks, a framework for creative coding. A textual interpretation of the activity in the aggregated feed was generated using NeuralTalk2, a machine learning model for image captioning, and then superimposed on the feed.

The camera feeds were chosen at random and changed automatically at a regular interval. If a camera went offline, another camera from a different location took its place. As a result, the visuals changed continuously and had no beginning or end, since they were captured in real time.
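The feed rotation described here can be sketched roughly as follows. This is a minimal Python illustration, not the installation's openFrameworks code: the `FeedRotator` class, the injected `is_online` health check, and the 30-second default interval are all assumptions made for the example, since the text does not specify them.

```python
import random
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class FeedRotator:
    """Shows one randomly chosen feed and swaps it on a timer or when it goes offline."""

    urls: List[str]
    is_online: Callable[[str], bool]  # hypothetical health check supplied by the caller
    interval_s: float = 30.0          # assumed interval; the source gives no value
    rng: random.Random = field(default_factory=random.Random)
    current: Optional[str] = None
    switched_at: float = 0.0

    def _pick(self, exclude: Optional[str]) -> Optional[str]:
        # Prefer a different feed than the one currently shown.
        candidates = [u for u in self.urls if u != exclude]
        self.rng.shuffle(candidates)
        for url in candidates:
            if self.is_online(url):
                return url
        # Fall back to the current feed if it is the only one still online.
        if exclude is not None and self.is_online(exclude):
            return exclude
        return None

    def tick(self, now: float) -> Optional[str]:
        """Return the feed to display at time `now` (in seconds)."""
        expired = self.current is not None and now - self.switched_at >= self.interval_s
        offline = self.current is not None and not self.is_online(self.current)
        if self.current is None or expired or offline:
            self.current = self._pick(exclude=self.current)
            self.switched_at = now
        return self.current
```

Calling `tick()` once per frame with the current time keeps the display on one feed until the interval elapses or the health check fails, at which point a different online feed is chosen at random. Passing the health check in as a function keeps the sketch independent of how feeds are actually fetched.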

Early tests with a prototype

Approach

Speculation and Sketching with Code

discovery and brainstorming

I began the project by creating a collage of media reports and research articles related to smart cameras, their use and breakdowns, and anxieties related to them. I used this collage as a mood board for thinking about questions and issues, and for brainstorming concepts that could seem believable in such a media landscape. Believability was important because I felt that an overly dystopian idea could feel improbable and too detached from reality. With the concept, I wanted to create an experience that mixed the familiar with the strange, to challenge preconceptions and allow alternate understandings of technology to emerge.

I went through many phases of brainstorming, concept sketching, and scenario building before deciding on deconstructing and inverting the idea of a smart camera. Some themes I explored were –

01/

What if a smart surveillance camera could not monitor and interpret activities in a particular space? What if it monitored and interpreted the user and unsecured camera feeds from other cameras instead?

sketching with code

Besides sketching concepts, the process also involved sketching with code – tinkering with different machine learning algorithms to understand the different possibilities they presented for the concepts. This process was different from prototyping a concept sketch in code because here code became another medium through which I could explore and develop concepts.

From my experiments, I found that in the context of smart surveillance cameras, machine learning algorithms can broadly be used to –

- identify objects, people, and places (in an image or video)

- generate descriptions of the activities and interactions

Compared to identification, any description of an image or video is by its very nature ambiguous and imprecise. I felt that this ambiguity and imprecision would help viewers create their own narratives and reflect on the gaps in the machine interpretation of the visual feed from a surveillance camera.
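The difference between the two kinds of output can be illustrated with a small Python sketch. Everything here is hypothetical: the `Detection` type, the confidence threshold, and both helper functions are invented for the example and are not taken from the installation's code or from any specific library.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Detection:
    label: str         # a discrete class name, e.g. "person"
    confidence: float  # score between 0.0 and 1.0


def identification_overlay(detections: List[Detection], threshold: float = 0.5) -> str:
    """Identification: discrete labels that can be filtered by confidence."""
    kept = [d for d in detections if d.confidence >= threshold]
    return ", ".join(f"{d.label} ({d.confidence:.0%})" for d in kept)


def description_overlay(caption: str) -> str:
    """Description: one free-text sentence, shown as generated."""
    return caption.strip()
```

An identification result reduces to a checklist of labels and scores, while a description is a single sentence with nothing to threshold, which leaves the viewer to judge how well it fits the scene.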

02/

Snippet of the final installation’s code

Setting up Eyespy’s camera and secondary display

Exhibits

Grassomania 8, Gdansk, Poland

Dagen @ IFI 2016, Oslo, Norway

Skaperfestival 2016, Oslo, Norway

Publication

I discussed the Eyespy and EyespyTV design concepts and their process in a peer-reviewed research paper. The paper was presented at the NordiCHI 2018 conference in Oslo, Norway, together with my PhD advisor Prof. Alma Leora Culén.

Research Paper

Pandey, S., & Culén, A. L. (2018). Eyespy: Designing Counterfunctional Smart Surveillance Cameras. Proceedings of the 10th Nordic Conference on Human-Computer Interaction, 838–843.

More Projects

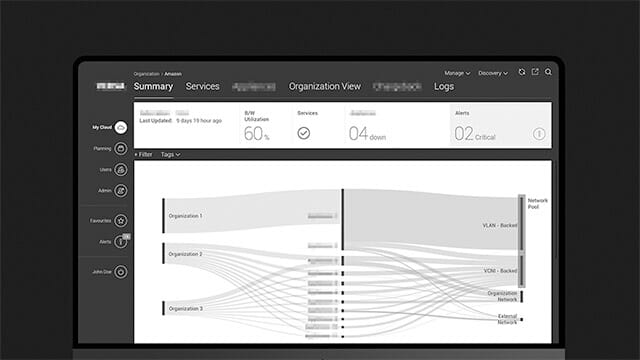

Versa Networks - Software Defined Network (SDN) Application0 to 1 product design for a SDN management application

D+H India - Transaction Banking App0 to 1 design of a responsive transaction banking app

LibraryUXIntroducing and Positioning Design for the University of Oslo Library

FriluxBranding Design at the University of Oslo Library

IBM Cloud Storage ManagementRe-designing the cloud object storage application to seamlessly manage and monitor large-scale storage clusters

EyespyTVProbing smart surveillance through a portable TV and cultural probes

HearsayA voice-enabled lamp that is ‘always in conversation’

TibloDesigning an open-ended and tangible learning aid for young children with dyslexia

NeuralA fictional technology magazine from 2025